Validation is one of the most frequently mentioned skills in Clinical Data Analytics—and one of the least understood.

Many candidates say they “know validation.”

Most hiring managers would disagree.

This edition breaks down what validation actually means in clinical environments, why it carries disproportionate weight in interviews, and why so many candidates fail at it despite strong technical preparation.

What Validation Really Means in Clinical Analytics

Validation is not:

• Running a few checks

• Comparing outputs visually

• Confirming that code executes without errors

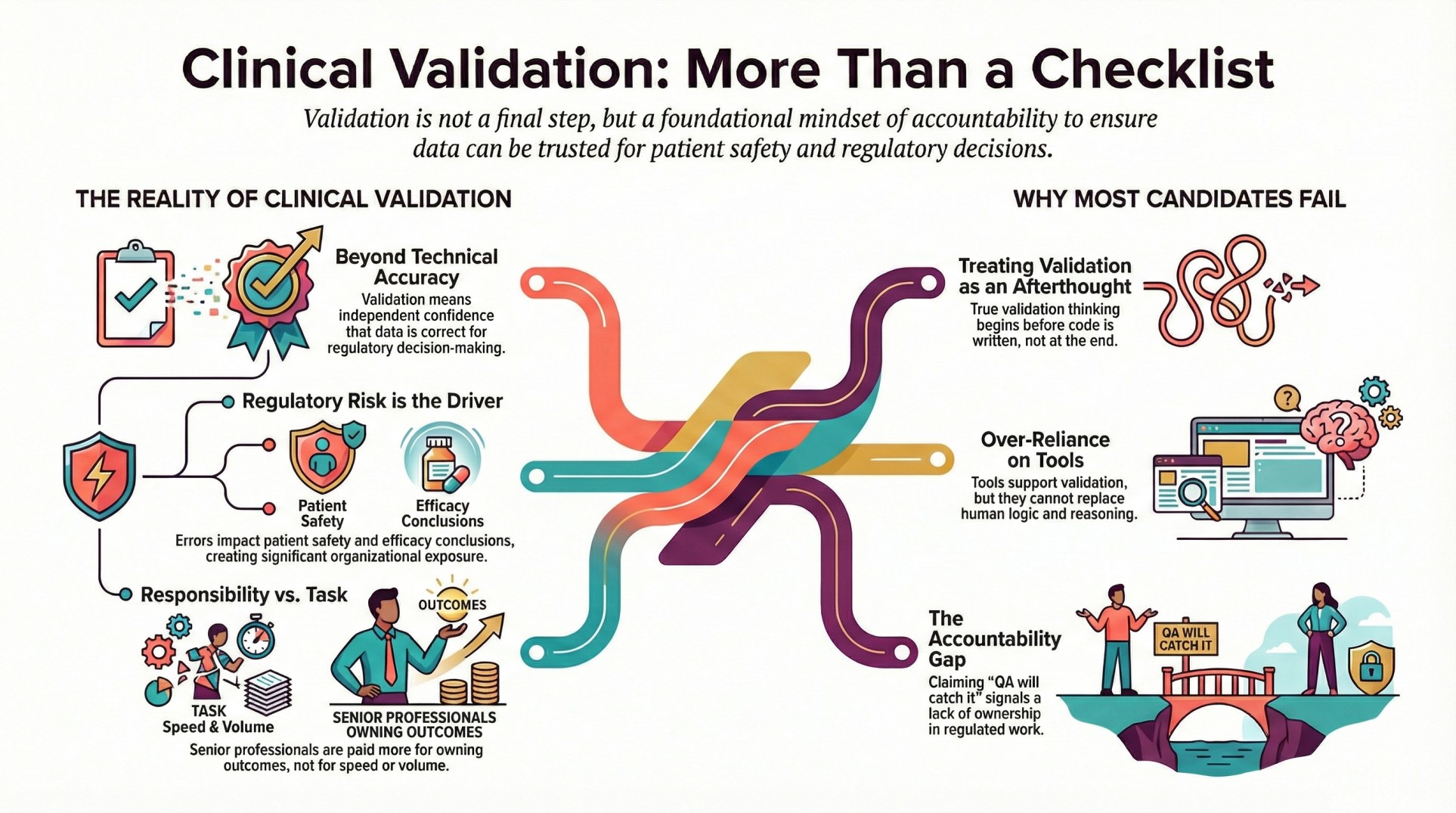

In regulated clinical work, validation means establishing, through independent checks, confidence that data and outputs are correct. It answers one critical question:

Can this data be trusted in a regulatory decision?

That question drives how validation is performed, reviewed, and documented.

Why Validation Carries Regulatory Risk

Clinical trial data directly influences:

• Patient safety assessments

• Efficacy conclusions

• Approval decisions

Errors don’t just cause rework—they create regulatory exposure.

This is why organisations treat validation as a responsibility, not a task. And this is why hiring managers pay close attention to how candidates think about validation during interviews.

How Validation Is Tested in Interviews

Interviewers rarely test validation directly. Hiring managers may ask:

• How would you confirm this derivation is correct?

• What would you do if two outputs don’t match?

• How do you validate your own work before review?

Candidates expecting checklist questions are caught off guard. Interviews are designed to reveal judgement, not memorisation.
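To make the second question concrete, here is a minimal sketch of one way to approach two outputs that don't match: compare them record by record and list every discrepancy before forming a view on which side is wrong. It assumes two independently produced result sets held as lists of dictionaries. The function name `compare_outputs`, the key `USUBJID`, and the column `CHG` are illustrative choices, not taken from any particular study or tool.

```python
from math import isclose


def compare_outputs(primary, qc, key="USUBJID", rel_tol=1e-9):
    """Compare two independently produced record lists keyed by `key`.

    Returns one (key_value, column, primary_value, qc_value) tuple per
    discrepancy. A record present on only one side is reported with the
    column "<record>" and two booleans showing which side has it.
    """
    p_index = {row[key]: row for row in primary}
    q_index = {row[key]: row for row in qc}
    issues = []
    for k in sorted(p_index.keys() | q_index.keys()):
        p_row, q_row = p_index.get(k), q_index.get(k)
        if p_row is None or q_row is None:
            issues.append((k, "<record>", p_row is not None, q_row is not None))
            continue
        for col in sorted(p_row.keys() | q_row.keys()):
            a, b = p_row.get(col), q_row.get(col)
            both_numeric = isinstance(a, (int, float)) and isinstance(b, (int, float))
            # Numeric values are compared with a tolerance, everything else exactly.
            same = isclose(a, b, rel_tol=rel_tol) if both_numeric else a == b
            if not same:
                issues.append((k, col, a, b))
    return issues


# Hypothetical data: the production output and an independent QC output.
primary = [{"USUBJID": "001", "CHG": -2.5}, {"USUBJID": "002", "CHG": 1.0}]
qc = [
    {"USUBJID": "001", "CHG": -2.5},
    {"USUBJID": "002", "CHG": 1.5},
    {"USUBJID": "003", "CHG": 0.0},
]
issues = compare_outputs(primary, qc)
```

The listing is only the starting point. The answer an interviewer is listening for is what happens next: tracing each discrepancy back to the specification and the source data, rather than assuming either program is the correct one.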

The Most Common Validation Failures

Several patterns appear repeatedly:

Treating Validation as an Afterthought

Candidates describe validation as something done “at the end.”

In reality, validation thinking starts before code is written.
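One way to picture validation starting before the code does: work out the expected results by hand from the specification first, then check the derivation against them. The sketch below assumes a hypothetical specification rule, "CHG = AVAL - BASE, and CHG is missing when either input is missing". The names `EXPECTED_CASES`, `derive_chg`, and `failing_cases` are invented for illustration.

```python
# Expected results worked out by hand from the hypothetical specification,
# written down before any derivation code exists.
EXPECTED_CASES = [
    ({"AVAL": 120.0, "BASE": 130.0}, -10.0),
    ({"AVAL": 130.0, "BASE": 130.0}, 0.0),
    ({"AVAL": None, "BASE": 130.0}, None),   # missing analysis value
    ({"AVAL": 120.0, "BASE": None}, None),   # missing baseline
]


def derive_chg(record):
    """Change from baseline; missing when either input is missing."""
    aval, base = record.get("AVAL"), record.get("BASE")
    if aval is None or base is None:
        return None
    return aval - base


def failing_cases(derive, cases):
    """Return (inputs, expected, actual) for every case the derivation gets wrong."""
    failures = []
    for inputs, expected in cases:
        actual = derive(inputs)
        if actual != expected:
            failures.append((inputs, expected, actual))
    return failures


failures = failing_cases(derive_chg, EXPECTED_CASES)
```

The missing-value cases are the point of the exercise. They force a decision about edge behaviour while reading the specification, not after a reviewer finds the gap.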

Depending Entirely on Tools

Tools support validation. They do not replace reasoning.

Candidates who rely on automated checks but cannot explain the logic behind them come across as a risk.

Inability to Explain Errors

Validation is not about avoiding errors—it’s about handling them correctly.

Candidates who cannot explain why something went wrong raise red flags.

Avoiding Ownership

Statements like “QA will catch it” signal lack of accountability.

In regulated work, everyone owns quality.

Why Senior Roles Are Paid More

Salary growth in Clinical Data Analytics is not driven by speed or volume of work.

It is driven by:

• Ownership of validation outcomes

• Responsibility for data integrity

• Ability to sign off confidently

Senior professionals are paid more because regulatory risk sits with them.

The Gap Between Training and Reality

Many training programmes teach:

• What validation is

• Which checks to run

Few teach:

• How to think during validation

• How to question assumptions

• How to explain decisions under review

This gap is where most candidates struggle when they move from learning environments into real teams.

Why Validation Defines Career Stability

Validation-focused professionals tend to:

• Be trusted earlier

• Receive responsibility faster

• Remain relevant longer

In regulated industries, trust compounds over time.

Closing Thought

Validation is not a step in the workflow.

It is a mindset.

Candidates who understand this move forward.

Those who don’t often stall—regardless of how strong their technical skills appear on paper.