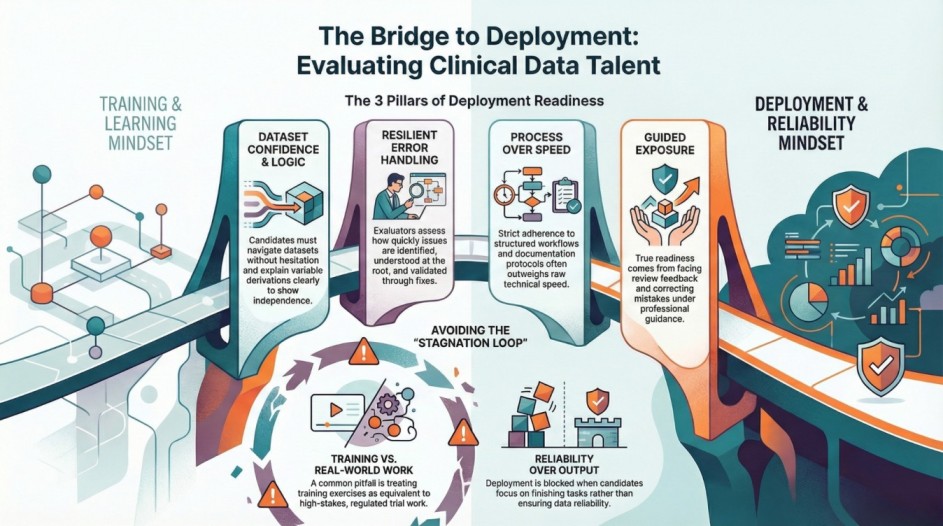

There is a wide gap between learning Clinical Data Analytics and being deployed into a real clinical team. Many candidates complete training successfully yet never reach deployment. Others reach it faster, even with less formal training.

The difference lies in how talent is evaluated after training, not during it.

This edition explains what happens between “course completion” and “deployment”, and why that gap decides most outcomes.

Why Completion Does Not Equal Deployment

Training answers one question:

Can the candidate learn the material?

Deployment answers a different one:

Can the candidate be trusted in a live, regulated environment?

Most hiring delays and rejections happen at this second question.

How Talent Is Evaluated After Training

Once training ends, evaluation becomes practical and behaviour-driven.

1. Dataset Confidence

Evaluators look for whether a candidate can:

- Navigate datasets without hesitation

- Explain the purpose of each variable clearly

- Trace each derivation back to its source data and logic

Uncertainty here signals dependence on others, which teams avoid in regulated work.

2. Error Handling

Errors are expected. How candidates respond to them matters more.

Evaluators assess:

- How quickly issues are identified

- Whether root causes are understood

- How fixes are validated

Candidates who panic or deflect responsibility rarely progress.

3. Explanation Clarity

Deployment-ready professionals can explain:

- What they did

- Why they did it

- What assumptions were made

Clear explanations reduce review cycles and risk.

4. Process Discipline

Clinical analytics runs on process.

Evaluators notice whether candidates:

- Follow structured workflows

- Maintain documentation

- Respect review protocols

Strong process discipline often outweighs raw technical speed.

Why Some Trained Candidates Never Get Deployed

Several patterns commonly block deployment:

- Treating training exercises as equivalent to live clinical trial work

- Needing constant guidance for basic decisions

- Assuming validation is someone else’s responsibility

- Focusing on output instead of reliability

These behaviours raise risk concerns—especially in regulatory contexts.

Guided Exposure vs Self-Learning

Self-learning builds familiarity.

Guided exposure builds judgement.

Deployment-ready talent usually has:

- Worked through real-world scenarios

- Faced review feedback

- Learned to correct mistakes under guidance

This experience changes how candidates think—not just what they know.

Why Organisations Prefer Structured Pipelines

Many teams now prefer structured talent pipelines over ad-hoc hiring because pipelines:

- Reduce onboarding risk

- Improve consistency

- Shorten deployment timelines

This shift reflects a broader move toward reliability over speed.

What This Means for Career Progression

Professionals who reach deployment:

- Gain responsibility faster

- Build trust earlier

- Progress more steadily

Those who remain in learning loops often stagnate despite strong credentials.

Closing Thought

Learning builds capability.

Deployment builds confidence—in both the professional and the organisation.

Understanding how talent is evaluated after training helps candidates prepare realistically and helps employers hire responsibly.